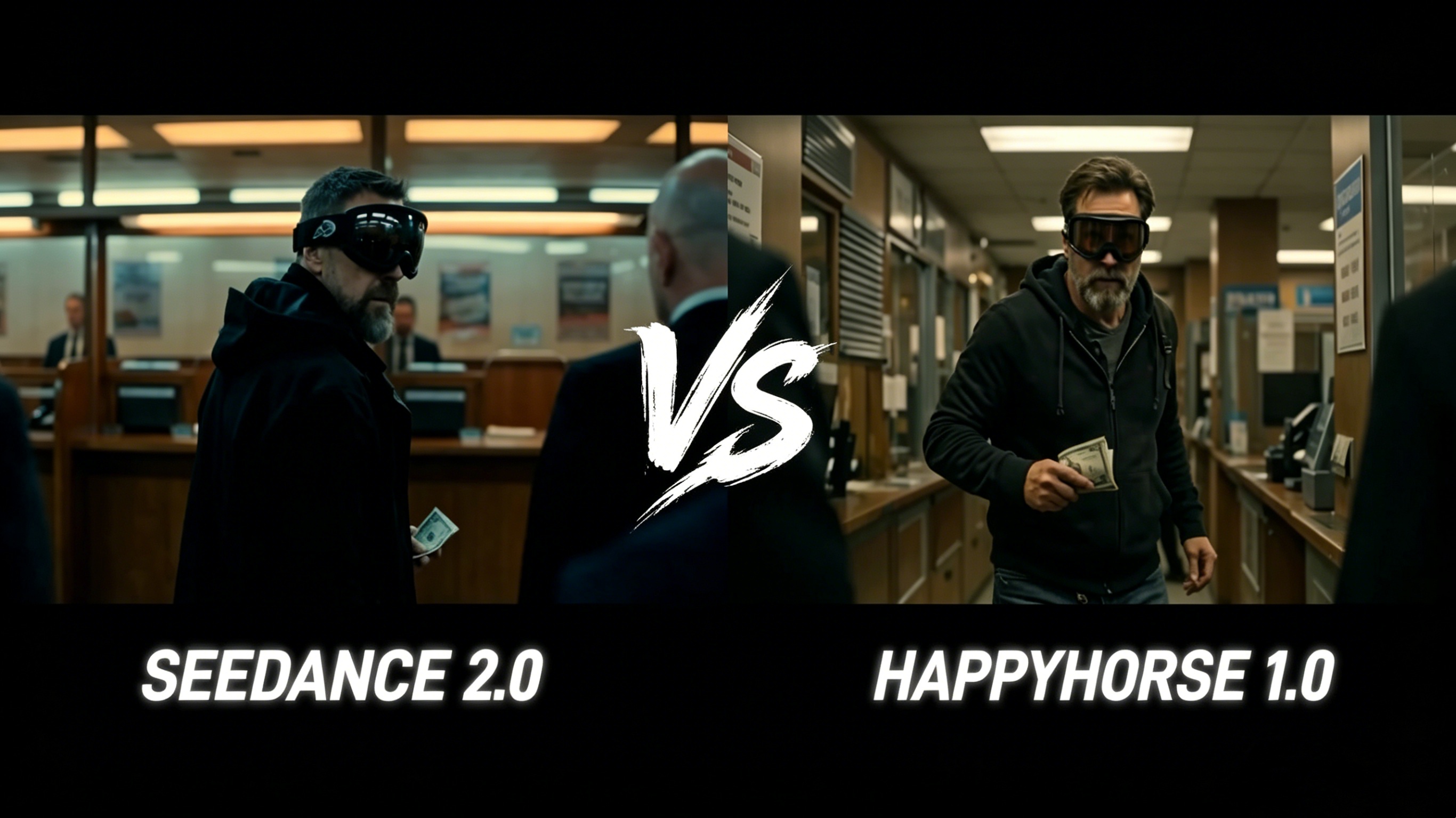

The AI video generation landscape shifted dramatically in April 2026. Alibaba quietly released Happy Horse 1.0, a 15-billion-parameter open-source model that immediately topped the Artificial Analysis Video Arena leaderboard — outranking ByteDance’s highly anticipated Seedance 2.0 by more than 100 Elo points. For content creators, marketers, and filmmakers, the big question is no longer just “which model should I try?” but “which model is the right tool for my specific project?” This guide breaks down the Happy Horse 1.0 vs Seedance 2.0 comparison across every dimension that matters, so you can make an informed decision before you generate your next video.

What Are These Two Models?

Happy Horse 1.0 — Alibaba’s Open-Source Challenger

Happy Horse 1.0 is developed by the Future Life Lab at Taotian Group, part of the Alibaba ecosystem, led by Zhang Di — the architect behind Kuaishou’s Kling 1.0 and 2.0 video generation models. It surfaced as a mystery model on the Artificial Analysis Video Arena around April 7, 2026, without revealing its origin, and quickly climbed to the top of blind-test rankings for both text-to-video (Elo 1,341) and image-to-video (Elo 1,402) generation. The reveal surprised the industry: Happy Horse 1.0 is not just competitive — it is fully open-source, including the base model, distilled model, super-resolution module, and inference code, all available for commercial use and self-hosting.

Seedance 2.0 — ByteDance’s Multimodal Powerhouse

Seedance 2.0 is a native multimodal audio-video generation model developed by ByteDance, officially released in China in early February 2026 and launched globally in April 2026. It represents a major architectural leap over Seedance 1.0 and 1.5 Pro, adopting a unified, large-scale architecture designed for joint audio-video generation. It supports four input modalities (text, image, audio, and video) and accepts up to 12 reference assets in a single generation session, with per-type caps of 9 images, 3 video clips, and 3 audio files.

Architecture: How the Two Models Are Built

Happy Horse 1.0 Architecture

Happy Horse 1.0 is built on a 40-layer unified self-attention Transformer — a fundamentally different design choice compared to most video generation models. Rather than using cross-attention for text conditioning and a separate module for audio, the model processes text, image, video, and audio tokens in a single unified sequence. The architecture uses a “sandwich” layout: the first 4 and last 4 layers handle modality-specific embedding and decoding, while the 32 middle layers share parameters across all modalities. This design results in exceptional parameter efficiency, where most of the network performs cross-modal reasoning rather than operating in siloed sub-networks.
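The layer split described above can be checked with a few lines of Python. This is only an illustrative sketch of the 4 + 32 + 4 layout: the function name and role labels are hypothetical, and it counts layers, not parameters, since the article does not give per-layer parameter sizes.

```python
from collections import Counter


def sandwich_layout(total_layers: int = 40, edge_layers: int = 4) -> list[str]:
    """Label each layer by role: modality-specific at both ends, shared in the middle."""
    if total_layers < 2 * edge_layers:
        raise ValueError("edge layers exceed total layers")
    middle = total_layers - 2 * edge_layers
    return (
        ["modality_specific_in"] * edge_layers
        + ["shared"] * middle
        + ["modality_specific_out"] * edge_layers
    )


layout = sandwich_layout()
counts = Counter(layout)
shared_fraction = counts["shared"] / len(layout)

# Number of shared layers and their share of the stack
print(counts["shared"], f"{shared_fraction:.0%}")
```

With the figures quoted in the article, 32 of the 40 layers (80% of the stack) are shared across modalities.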

DMD-2 distillation further reduces the denoising process to just 8 steps without classifier-free guidance, and combined with MagiCompiler accelerated inference, the model produces a 5-second video at 256p in approximately 2 seconds, and a full 1080p video in roughly 38 seconds on an NVIDIA H100.
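To see why 8 steps without classifier-free guidance matters, it helps to count network evaluations per clip. The sketch below assumes that guidance costs two model evaluations per step (one conditional, one unconditional), which is how classifier-free guidance is usually run. The 50-step guided baseline is an assumption chosen for illustration and does not come from the article.

```python
def forward_passes(steps: int, use_cfg: bool) -> int:
    """Count model evaluations for one sample.

    Classifier-free guidance evaluates the model twice per step
    (conditional and unconditional), so it doubles the count.
    """
    return steps * (2 if use_cfg else 1)


# Assumed baseline for illustration only: 50 denoising steps with guidance.
baseline = forward_passes(steps=50, use_cfg=True)

# Figures from the article: 8 steps, no classifier-free guidance.
distilled = forward_passes(steps=8, use_cfg=False)

print(baseline, distilled, baseline / distilled)
```

Under that assumed baseline the distilled model needs 8 evaluations instead of 100. Real wall-clock savings depend on resolution, batching, and the compiler, so the ratio is a rough guide rather than a measured speedup.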

Seedance 2.0 Architecture

Seedance 2.0 is built on a unified, large-scale architecture for joint audio-video generation. According to its technical paper (arXiv:2604.14148), the model delivers substantial improvements across all key sub-dimensions of video and audio generation. It supports native output resolutions of 480p and 720p, with video durations ranging from 4 to 15 seconds. The model uses a dual-branch approach to audio-visual synchronization, generating synchronized dialogue with precise lip-sync, ambient soundscapes, and music that follows narrative rhythm, all in a single forward pass.

Key architectural difference: Happy Horse 1.0 uses a single-stream unified self-attention Transformer, while Seedance 2.0 uses a unified architecture with a dual-branch design for audio-visual synchronization. Happy Horse 1.0 outputs 1080p natively, while Seedance 2.0 currently tops out at 720p native output.

Video Quality and Resolution

Happy Horse 1.0 Video Quality

Happy Horse 1.0 generates native 1080p video with rich color grading, accurate lighting, and film-grade detail. In the Artificial Analysis Video Arena’s blind human preference tests (with over 3,000 votes), it outperformed Seedance 2.0, Kling 3.0, Google Veo 3.1, and PixVerse V6. Reviewers consistently highlight its remarkably fluid and natural motion — from subtle facial expressions to complex full-body movements — as well as its physical realism. The model handles complex scenes, including multi-agent interactions and realistic physics, with exceptional stability.
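To put the Elo gap in perspective, the sketch below converts a rating difference into an expected head-to-head preference rate. It assumes the arena uses the standard logistic Elo formula with a 400-point scale. The article does not describe the leaderboard's exact methodology, so treat the result as an approximation.

```python
def elo_win_probability(gap: float) -> float:
    """Expected share of pairwise votes won by the higher-rated model.

    `gap` is the rating difference (higher minus lower), using the
    standard Elo logistic curve with a 400-point scale.
    """
    return 1.0 / (1.0 + 10 ** (-gap / 400.0))


# A 100-point gap, the lead cited in the introduction
p = elo_win_probability(100)
print(f"{p:.2f}")  # 0.64
```

Under these assumptions, a 100-point lead means voters would prefer the higher-rated model in roughly 64% of direct comparisons: a clear lead, but not a sweep.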

Seedance 2.0 Video Quality

Seedance 2.0 delivers cinematic-quality output with exceptional motion stability. It is specifically optimized for multi-shot narrative coherence, meaning characters, visual style, and atmosphere remain perfectly consistent across complex shot transitions and temporal shifts. Users can control camera angles, transitions, and timing down to the individual frame. The model handles a wide range of sophisticated styles, including photorealism, cinematic looks, and specialized aesthetics. Its physical simulation — covering fabric flow, character interaction with environments, water, smoke, and particle effects — is grounded in real-world physics.

Edge in resolution: Happy Horse 1.0 (native 1080p vs 720p for Seedance 2.0). Edge in multi-shot narrative control: Seedance 2.0, which was architecturally designed for multi-shot storytelling from the ground up.

Audio Capabilities: Native vs Post-Production

Happy Horse 1.0 Audio

Happy Horse 1.0 delivers phoneme-level lip-sync in 7 languages: English, Mandarin, Cantonese, Japanese, Korean, German, and French. Its unified Transformer generates video and audio in a single pass, so dialogue, ambient sounds, and Foley effects are natively synchronized and need no post-production dubbing. This is a major practical advantage for creators working across multilingual content or international campaigns.

Seedance 2.0 Audio

Seedance 2.0 also generates audio natively alongside video in a single pass. It produces synchronized dialogue with precise lip-sync, ambient soundscapes, and music that follows the narrative rhythm. Users can upload audio files (up to 3, each up to 15 seconds) to sync video to uploaded audio or music beats — an important feature for dance, music video, and rhythm-driven content. The model can also auto-generate context-aware sound effects and background music based on the visual content.

Edge in multilingual lip-sync: Happy Horse 1.0, with ultra-low Word Error Rate across 7 languages. Edge in audio-to-video synchronization: Seedance 2.0, which allows audio uploads to drive beat-synced generation.

Input Flexibility and Creative Control

Happy Horse 1.0 Inputs

Happy Horse 1.0 accepts text prompts and image inputs, with support for image-to-video generation. Its prompt adherence is extremely strong, accurately translating complex scene descriptions — including multi-agent interactions, camera movements, lighting directions, and motion styles — into output that reflects what was described. The model is accessible via browser on any device and integrates via RESTful API with sub-10-second generation for shorter clips.

Seedance 2.0 Inputs

Seedance 2.0 is the clear leader in raw input flexibility. Users can combine up to 9 images, 3 video clips (15 seconds each), and 3 audio files in a single generation. References are written in natural language inside the prompt (e.g., “reference @Video1 for walking motion and camera angle”), which lets users point at specific motion, camera movements, visual effects, characters, scenes, and sounds. The model is designed to keep faces, clothing, text overlays, and visual styles consistent across the entire output.
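When scripting uploads, it can help to check an asset bundle against these limits before submitting a job. The sketch below is a hypothetical local pre-flight check, not part of any Seedance API. The class and function names are invented for illustration, and treating the 12-asset figure mentioned earlier as a combined cap on top of the per-type limits is an assumption.

```python
from dataclasses import dataclass

# Per-type caps quoted in the article; treating 12 as a combined cap is an assumption.
MAX_IMAGES = 9
MAX_VIDEOS = 3
MAX_AUDIO = 3
MAX_TOTAL = 12


@dataclass
class AssetBundle:
    """Hypothetical container for the number of reference assets of each type."""
    images: int = 0
    videos: int = 0
    audio: int = 0


def validate_bundle(bundle: AssetBundle) -> list[str]:
    """Return a list of problems; an empty list means the bundle fits the limits."""
    errors = []
    if bundle.images > MAX_IMAGES:
        errors.append(f"too many images: {bundle.images} > {MAX_IMAGES}")
    if bundle.videos > MAX_VIDEOS:
        errors.append(f"too many videos: {bundle.videos} > {MAX_VIDEOS}")
    if bundle.audio > MAX_AUDIO:
        errors.append(f"too many audio files: {bundle.audio} > {MAX_AUDIO}")
    total = bundle.images + bundle.videos + bundle.audio
    if total > MAX_TOTAL:
        errors.append(f"too many assets in total: {total} > {MAX_TOTAL}")
    return errors


# 9 images + 3 videos = 12 assets, which fits
print(validate_bundle(AssetBundle(images=9, videos=3)))
# 9 + 3 + 3 = 15 assets, which exceeds the assumed combined cap
print(validate_bundle(AssetBundle(images=9, videos=3, audio=3)))
```

Under the combined-cap reading, maxing out every per-type limit at once (15 assets) is rejected, so a bundle has to trade images against video or audio references.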

Edge in input modality depth: Seedance 2.0 — unmatched multi-asset referencing capability. Edge in ease of use: Happy Horse 1.0 — simpler interface, equally strong prompt adherence.

Generation Speed

Happy Horse 1.0 is the faster of the two models. With DMD-2 distillation (8 denoising steps) and MagiCompiler inference, it takes approximately 2 seconds for a 5-second 256p clip and about 38 seconds for a 1080p video on an NVIDIA H100. Seedance 2.0 completes generations in under 2 minutes, with fast-tier endpoints offering lower latency for production workloads. For rapid iteration and high-volume content pipelines, Happy Horse 1.0 holds a clear speed advantage.
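For pipeline planning, these latencies translate into rough hourly throughput. The sketch below is back-of-the-envelope arithmetic that assumes one generation at a time with no queueing, model loading, or upload overhead. The Seedance figure uses the "under 2 minutes" bound as a worst case on a managed service, so the two numbers are not a like-for-like hardware comparison.

```python
def clips_per_hour(seconds_per_clip: float, workers: int = 1) -> float:
    """Sequential throughput per hour, ignoring queueing and startup overhead."""
    if seconds_per_clip <= 0:
        raise ValueError("seconds_per_clip must be positive")
    return workers * 3600.0 / seconds_per_clip


# Figure from the article: about 38 seconds per 1080p clip on one H100
happy_horse_1080p = clips_per_hour(38)

# Upper bound from the article: generations finish in under 2 minutes
seedance_worst_case = clips_per_hour(120)

print(f"{happy_horse_1080p:.1f}", f"{seedance_worst_case:.1f}")
```

That works out to roughly 95 clips per hour per H100 for Happy Horse 1.0 at 1080p, against at least 30 per hour for a single sequential Seedance 2.0 stream.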

Open Source vs Closed Ecosystem

This is one of the most significant practical differences between the two models. Happy Horse 1.0 is fully open-source — base model, distilled model, super-resolution module, and inference code are all publicly available with commercial usage rights. Teams can self-host, fine-tune on custom datasets, and integrate directly into existing infrastructure. Seedance 2.0 is a proprietary closed model, accessible via ByteDance’s Dreamina platform, via API through third-party providers, or through platforms that have licensed the model.

For enterprise teams with specific compliance requirements, researchers who need to fine-tune, or studios building custom pipelines, the open-source nature of Happy Horse 1.0 is a significant advantage. For teams that prefer a managed, cloud-hosted solution with a richer creative toolset, Seedance 2.0’s ecosystem is more mature.

Which Model Should You Use?

Choose Happy Horse 1.0 if you:

Need native 1080p resolution output

Work with multilingual content requiring accurate lip-sync across 7 languages

Want to self-host or fine-tune the model on your own infrastructure

Prioritize raw generation speed for high-volume workflows

Need the highest-performing model according to current blind benchmark rankings

Choose Seedance 2.0 if you:

Need advanced multi-asset referencing (combining images, videos, and audio in one generation)

Are creating multi-shot narrative videos with complex character consistency requirements

Want beat-synced audio generation for dance or music content

Prefer a managed cloud solution with a mature creative platform

Are working on projects that benefit from frame-level creative control

Final Verdict: Happy Horse 1.0 vs Seedance 2.0

Both models represent the cutting edge of AI video generation in 2026, and the Happy Horse 1.0 vs Seedance 2.0 competition is genuinely close. Happy Horse 1.0 leads on benchmark performance, native resolution, speed, and open-source flexibility. Seedance 2.0 leads on input richness, multi-shot storytelling, and creative reference control. For most solo creators and small teams, Happy Horse 1.0’s combination of quality and speed is hard to beat. For studios and professional productions requiring complex multi-asset workflows, Seedance 2.0’s depth of creative control makes it the stronger choice.

The best approach? Test both. Seedance 2.0 is available right now — bring your creative vision and see what the model can do.