Will ByteDance Surpass Google? Seedance 2.0 Defines New Heights for Industrial-Grade AI Audio-Visual Generation

Not long ago, Google DeepMind CEO Demis Hassabis offered a jolting assessment in an interview: ByteDance is only about six months behind top-tier companies like Google. Coming from a tech leader as traditionally restrained as Hassabis, this was more than recognition; it was a signal of urgency.

This week, with the rapid-fire release of Seedance 2.0, Seedream 5.0 Lite, and Doubao Large Model 2.0, creators worldwide are revisiting that assessment. Some industry observers now believe that in video generation the gap may have narrowed to just one to two months. The breakout reception of Seedance 2.0 signals that AI video has crossed a threshold, moving from its "childhood" into an era of industrial production.

Core Capabilities: Why is Seedance 2.0 Achieving a "Stunning" Consensus?

While benchmarks remain important, what truly moved creators to reconsider their stance is how consistently and predictably the model behaves on prompts with complex logic.

1. The "Newtonian" Return of Physical Laws

Seedance 2.0 demonstrates a near-perfect understanding of physical logic. Whether the subject is the shifting texture of marble, the fine detail of flowing liquid, or a character’s subtle micro-expressions under a specific emotion (such as swallowing or a cautious glance), its output no longer reads as a simple stack of pixels. The model appears to have built an internal "World Model."

2. Industrial-Grade Instruction Following: Farewell to "Selective Hallucinations"

In Seedance 2.0, creators can direct the camera with precision using natural language:

Complex Script Rendering: Even when given extremely long prompts containing intricate cinematic logic, the model understands them precisely and renders them faithfully, eliminating the "partial neglect" of instructions common in previous models.

Silky Transitions: Whether it is a scene transition across visual styles or a long take that shifts from a third-person view to a subjective POV, the fluidity of the cinematic language has reached professional film-industry standards.

3. Audio-Visual Synergy: The Power of Native Multimodality

Unlike "plug-in" sound effects added after the fact, Seedance 2.0 is built on a Native Multimodal Architecture. The model’s "brain" can simultaneously understand the visual rhythm and generate matching ambient sound, dialogue, and foley, achieving true audio-visual integration and significantly strengthening narrative coherence.

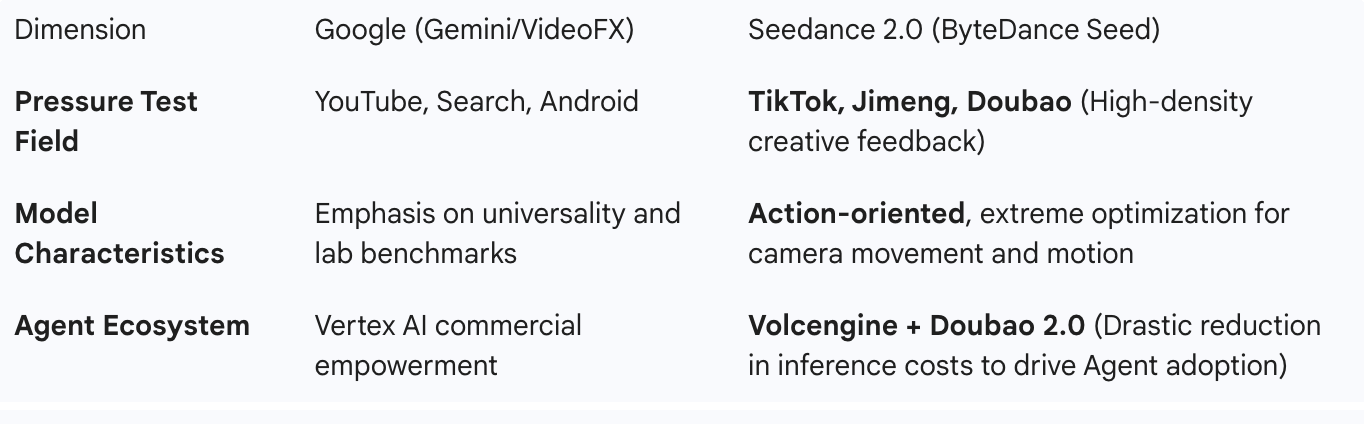

Strategic Comparison: ByteDance’s "Google-Level" Closed Loop

ByteDance is building a "Model-Application-Cloud" trinity that closely resembles Google’s.

As OpenAI’s Chief Product Officer once said, the best products come from deep research, and deep research requires massive iterative feedback. ByteDance’s vast application ecosystem supplies exactly that feedback, putting its models on an evolution curve of their own.

Industry Consensus: The Childhood of AI Video is Officially Over

During the week of Seedance 2.0’s release, the same reaction came from professional film circles and everyday creators alike:

Director Jia Zhangke, after observing the performance of Seedance 2.0, has begun discussing the possibility of using it for short film projects.

Feng Ji, producer of Black Myth: Wukong, remarked: "The childhood of AI is over."

This consensus suggests that AI video is now ready for professional use. Whether the task is an animated short in the style of Goose Mountain or a cinematic transition effect, Seedance 2.0 has taken a technology that had long hovered at the threshold and pushed it into a full-scale breakout.

Enjoy the Dividends of Technological Innovation

When high capability meets lower costs, the transformation is already under way. Seedance 2.0 is not just a tool; it is a professional director’s workstation that lets imagination be realized with industrial precision.

Seedance 2.0 is now live on the official website.

Now, please begin your directorial journey.